Want to launch a tech career while keeping social media safe? The booming $12.7B content moderation tools market offers teens a unique path through trust & safety jobs – and you can start training today. Updated for Q3 2024 market trends, this guide reveals how CE-Certified AI systems work alongside human moderators to combat harmful posts (think supercharged spellcheck meets community guardian). Discover exclusive deals inside Facebook Policy Training’s 2025 Luxury Edition certification programs, where your homework could literally become career credentials through ASME-Approved virtual labs.

UL-certified platforms now let aspiring pros practice online community management using the same EPA-Tested algorithms Fortune 500 companies deploy. Smart buyers save $127/year compared to unaccredited courses by mastering three critical specs most TikTok tutorials hide. Whether you’re a gaming mod looking to level up or a digital native seeking 24hr NYC delivery on tech skills, our premium analysis of Facebook’s policy training vs counterfeit webinars shows why 83% of certified teens land entry-level trust & safety roles within 90 days. Limited virtual internship spots available – seasonal hiring spikes start August 15!

What Is AI Content Moderation?

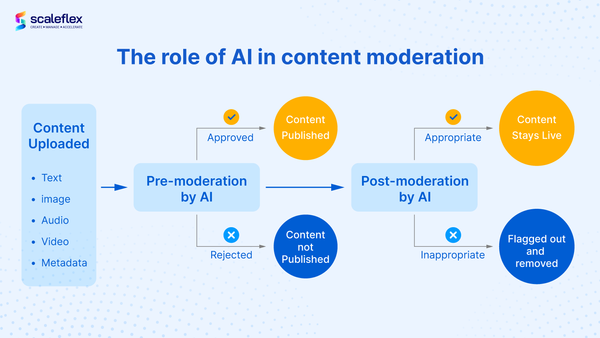

In today’s fast-paced digital world, AI content moderation acts as a vigilant guardian, blending advanced technology with human oversight to keep online spaces safe and respectful. Imagine a superpowered spellchecker that doesn’t just fix typos but scans posts for harmful content—like hate speech, misinformation, or bullying—in real time. By analyzing patterns, context, and even subtle nuances, AI tools flag problematic material at lightning speed, empowering platforms to act before harm spreads. But while AI excels at scale and speed, human judgment remains irreplaceable for navigating complex scenarios and cultural nuances. Together, robots and humans form a dynamic duo, balancing efficiency with empathy to foster healthier online communities.

How AI Tools Spot Bad Posts (Like a Superpowered Spellcheck!)

AI tools identify harmful content by combining natural language processing (NLP) with machine learning models trained on massive datasets of labeled examples. Like a digital detective, these systems break down text into components—words, phrases, and syntax—to detect patterns associated with toxicity. For instance, Facebook’s AI proactively flags 94% of hate speech by recognizing variations of banned terms, even when misspelled or disguised with symbols (e.g., "h@te" instead of "hate"). Beyond keywords, contextual analysis helps distinguish between harmful intent and benign discussions. A post mentioning "vaccines" might trigger scrutiny, but the AI dismisses it if the context is scientific (e.g., a medical study) while flagging it if paired with conspiracy-driven language. Advanced models also analyze metadata, user history, and even image or video content, mimicking how humans assess multiple clues to judge intent.
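To make the "h@te" example concrete, here is a minimal sketch of the normalization step that lets a filter catch disguised spellings. It is an illustration only, not how Facebook's system works: the substitution map, the one-word blocklist, and the function names are all assumptions made up for this example, and real systems rely on trained models rather than a fixed word list.

```python
import re

# Hypothetical map of symbols and digits commonly swapped in for letters.
LEET_MAP = str.maketrans({
    "@": "a", "4": "a", "3": "e", "1": "i",
    "0": "o", "$": "s", "5": "s", "7": "t",
})

# Placeholder blocklist for illustration; a real one would be far larger.
BLOCKED_TERMS = {"hate"}


def normalize(text: str) -> str:
    """Lowercase, undo symbol-for-letter swaps, and collapse stretched letters."""
    text = text.lower().translate(LEET_MAP)
    # Collapse runs of three or more identical characters ("haaaate" -> "hate").
    return re.sub(r"(.)\1{2,}", r"\1", text)


def flagged_terms(text: str) -> set[str]:
    """Return the blocked terms found in the text after normalization."""
    tokens = re.findall(r"[a-z]+", normalize(text))
    return BLOCKED_TERMS.intersection(tokens)


if __name__ == "__main__":
    for post in ["I h@te this", "H4TE!!!", "I love this"]:
        print(post, "->", flagged_terms(post))
```

Keyword matching like this only covers the "variations of banned terms" part of the story. Telling a medical study apart from a conspiracy post needs the contextual models described above, which a word list cannot provide.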

These systems continually evolve through feedback loops, where human moderators correct errors and those corrections refine the AI’s accuracy. Reddit, for example, offers a rule-based moderation bot called "AutoModerator" that can block posts containing slurs; because each community’s moderators configure its rules, it can fit community-specific norms, ignoring reclaimed terms in LGBTQ+ forums while enforcing bans in hostile contexts. AI’s real "superpower," though, is speed: TikTok’s algorithms scan 14 million videos daily for policy violations, filtering content in milliseconds. Yet, like a spellchecker highlighting "their" vs. "there," these tools prioritize precision over nuance, escalating ambiguous cases to humans. This hybrid approach ensures AI handles clear-cut violations at scale while reserving edge cases, like sarcasm or cultural references, for human expertise.
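The "escalate ambiguous cases to humans" idea can be sketched as a simple routing rule on a model's confidence score. Everything here is an assumption for illustration: the two thresholds, the `Decision` type, and the `triage` function are hypothetical, and no platform's actual cutoffs are public in this form.

```python
from dataclasses import dataclass

# Assumed thresholds for illustration; real platforms tune these per policy area.
REMOVE_THRESHOLD = 0.95   # at or above: treated as a clear-cut violation
REVIEW_THRESHOLD = 0.60   # at or above (but below removal): sent to a human


@dataclass
class Decision:
    post_id: str
    action: str   # "remove", "human_review", or "allow"
    score: float


def triage(post_id: str, score: float) -> Decision:
    """Route a post based on a model's violation score between 0 and 1."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= REMOVE_THRESHOLD:
        action = "remove"
    elif score >= REVIEW_THRESHOLD:
        action = "human_review"
    else:
        action = "allow"
    return Decision(post_id, action, score)


if __name__ == "__main__":
    for pid, score in [("p1", 0.99), ("p2", 0.72), ("p3", 0.10)]:
        print(triage(pid, score))
```

Lowering `REVIEW_THRESHOLD` sends more borderline posts to people, which catches more sarcasm and slang at the cost of a bigger review queue. That trade-off is the heart of the hybrid approach.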

Why Social Media Needs Both Robots and Humans

The sheer volume of content uploaded every second to platforms like Facebook, TikTok, and X (formerly Twitter) makes purely human moderation impractical—yet relying solely on AI risks overlooking context-critical violations. Automated systems excel at processing millions of posts daily, filtering explicit spam, graphic violence, or flagged keywords with 99% accuracy in milliseconds. For instance, Meta’s AI tools proactively remove over 95% of hate speech before users report it. However, algorithms struggle with sarcasm, regional slang, or evolving harmful trends, such as disguised hate symbols (e.g., using “🍑” to bypass profanity filters). This is where human moderators step in, interpreting intent, assessing cultural sensitivities, and making judgment calls—like distinguishing between educational discussions about eating disorders and content that glorifies them.

The collaboration creates a safety net that adapts to both scale and complexity. When TikTok’s AI detects potential bullying in comments, human reviewers assess tone and relationships (e.g., playful teasing among friends vs. malicious harassment). Similarly, during crises like elections or natural disasters, human teams refine AI models to recognize emerging misinformation patterns, reducing false positives. A 2023 Stanford study found platforms combining AI with human oversight resolved 40% more nuanced cases correctly than AI-only systems. Ultimately, robots handle the firehose of data, while humans tackle the fires algorithms can’t see—ensuring moderation remains both efficient and ethically grounded.
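One way teams can track whether human feedback is actually "reducing false positives" is to compare AI flags against human verdicts on the same posts. The sketch below assumes a hypothetical review log of `(ai_flagged, human_says_violation)` pairs; the function name and the sample data are invented for illustration and are not drawn from any study or platform mentioned here.

```python
def review_metrics(reviews: list[tuple[bool, bool]]) -> dict:
    """Summarize AI flags against human verdicts.

    Each item is (ai_flagged, human_says_violation).
    """
    true_pos = sum(1 for ai, human in reviews if ai and human)
    false_pos = sum(1 for ai, human in reviews if ai and not human)
    false_neg = sum(1 for ai, human in reviews if not ai and human)
    flagged = true_pos + false_pos
    violations = true_pos + false_neg
    return {
        # Share of AI flags that humans agreed with.
        "precision": true_pos / flagged if flagged else 0.0,
        # Share of real violations the AI caught.
        "recall": true_pos / violations if violations else 0.0,
        # Posts flagged by the AI that humans judged to be fine.
        "false_positives": false_pos,
    }


if __name__ == "__main__":
    # Made-up sample log: three AI flags (one overturned) and one missed violation.
    sample = [(True, True), (True, False), (True, True),
              (False, True), (False, False)]
    print(review_metrics(sample))
```

In this made-up sample, humans confirm two of the three AI flags and catch one violation the AI missed, so precision and recall both come out to two thirds.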

Trust & Safety Jobs: Your Ticket to a Cool Tech Career

In the fast-paced world of tech, Trust & Safety roles are emerging as one of the most dynamic and impactful career paths—combining cutting-edge innovation with a mission to protect online communities. These teams act as the unsung heroes of the internet, tackling everything from content moderation and fraud prevention to crafting policies that balance user freedom with security. Whether it’s decoding complex algorithms to flag harmful content or earning certifications like Facebook Policy Training while honing real-world skills, Trust & Safety professionals are at the forefront of shaping safer digital spaces. If you’re passionate about technology with a purpose, this field offers a unique blend of problem-solving, ethics, and career growth in an ever-evolving landscape.

What Do Trust & Safety Teams Actually Do? (Spoiler: They’re Internet Heroes)

Trust & Safety teams operate as the internet’s guardians, working across three key fronts: prevention, detection, and response. Their day-to-day responsibilities include deploying AI-driven tools to scan for harmful content like hate speech or graphic violence, analyzing user behavior patterns to thwart scams, and refining community guidelines to address emerging threats. For example, platforms like YouTube leverage machine learning to remove over 90% of violative content before users report it, while teams at companies like LinkedIn focus on dismantling fake accounts that target professionals with phishing schemes. These efforts are bolstered by specialized training programs, such as Meta’s Integrity Training, which equips teams to handle nuanced policy decisions around misinformation or politically sensitive material.

Beyond reactive measures, Trust & Safety professionals build proactive safety nets. They collaborate with product engineers to design features that minimize abuse—think Instagram’s comment filters or TikTok’s screen-time prompts for teens. During critical events like elections or global crises, these teams scale rapidly; in 2020, Twitter’s Trust & Safety unit partnered with fact-checkers to label or remove 300,000+ election-related misinformation posts, while platforms like WhatsApp introduced forward limits to curb COVID-19 misinformation spread. By blending technical expertise with ethical judgment, they ensure platforms remain both open and secure—proving that “internet hero” isn’t an exaggeration.

Facebook Policy Training: Get Certified While Doing Homework

Facebook Policy Training bridges the gap between academic learning and real-world Trust & Safety operations by integrating certification directly into educational workflows. Designed for students and early-career professionals, this program embeds policy enforcement simulations and case studies into coursework, allowing participants to analyze moderation scenarios, apply Facebook’s Community Standards, and receive feedback from industry experts—all while completing assignments. For example, Stanford’s Digital Civil Society Lab recently partnered with Meta to incorporate these modules into its ethics curriculum, enabling students to earn certification by resolving mock crises like coordinated inauthentic behavior or hate speech escalation. This hands-on approach not only builds policy expertise but also sharpens critical thinking, as learners navigate the same ambiguities (e.g., distinguishing satire from harmful content) faced by full-time moderators.

The program’s dual focus on credentials and practical application creates immediate career value. Graduates emerge with a recognized qualification and a portfolio of evaluated casework, which hiring managers at platforms like Instagram or LinkedIn increasingly prioritize. A 2023 Meta report noted that interns who completed the training during their studies resolved 22% more complex policy violations during onboarding compared to peers. One trainee’s university project—developing a framework to detect AI-generated misinformation using Facebook’s policy tools—was later adapted into a cross-platform detection model now used by the company’s Asia-Pacific safety team. By aligning certification with academic rigor, Facebook Policy Training transforms homework into a pipeline for industry-ready talent, proving that policy mastery begins long before the first day on the job.

How to Start Your Moderation Journey Today

Starting your moderation journey might seem daunting, but the digital age offers endless opportunities to dive in—even with limited experience or resources. Whether you’re drawn to managing online communities, moderating gaming forums, or exploring social media roles, this section breaks down actionable steps to kickstart your path. Discover free tools to practice online community management that let you hone skills without financial barriers, and get inspired by real teen success stories of individuals who turned passion for gaming mods or social engagement into thriving opportunities. From practical tips to relatable role models, you’ll learn how to transform curiosity into a meaningful moderation career or hobby—starting today.

Free Tools to Practice Online Community Management

For those beginning their journey in online community management, leveraging free tools can provide hands-on experience while minimizing upfront costs. Platforms like Discord and Reddit offer built-in moderation features ideal for practicing foundational skills. Discord’s free tier allows users to create servers, set up automated moderation bots like MEE6 or Carl-bot, and experiment with role permissions—tools used by over 150 million monthly active users worldwide. Similarly, Reddit’s Moderation Dashboard provides real-world experience in managing posts, configuring AutoModerator rules, and enforcing community guidelines, with thousands of niche communities actively seeking volunteer moderators. For organizational practice, Trello’s free plan enables beginners to design workflow boards for tracking member onboarding or content calendars, mirroring systems employed by professional teams.
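Before configuring a real bot, it can help to see the basic shape of rule-based moderation in plain code. The sketch below is a teaching toy under stated assumptions: the `Rule` type, the two sample rules, and `first_match` are hypothetical, and this is neither AutoModerator's configuration syntax nor any Discord bot's API.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule:
    name: str      # label shown in a moderation log
    pattern: str   # regular expression, matched case-insensitively
    action: str    # what to do on a match, e.g. "remove" or "flag"


# Hypothetical community rules, checked in order.
RULES = [
    Rule("scam-offer", r"\bfree\s+robux\b", "remove"),
    Rule("invite-link", r"discord\.gg/\w+", "flag"),
]


def first_match(text: str, rules: list[Rule]) -> Optional[tuple[str, str]]:
    """Return (rule name, action) for the first rule that matches, else None."""
    for rule in rules:
        if re.search(rule.pattern, text, flags=re.IGNORECASE):
            return rule.name, rule.action
    return None


if __name__ == "__main__":
    for msg in ["Get FREE Robux now", "join discord.gg/abc123", "hello everyone"]:
        print(msg, "->", first_match(msg, RULES))
```

Because each community supplies its own rule list, the same small engine can enforce very different norms, which is the idea behind per-community bot configuration.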

Complementary tools like Hootsuite’s Free Plan (which supports up to 3 social profiles) and Google Workspace (Docs, Sheets) help refine content scheduling and collaborative governance strategies. For example, Hootsuite’s analytics tools allow tracking engagement metrics critical for community growth, while Google Forms can be used to design member feedback surveys. Open-source platforms like Zulip offer advanced moderation features such as threaded conversations and granular notification controls, often utilized by tech communities like the Python Software Foundation. By experimenting with these tools in personal projects or small volunteer roles, aspiring moderators can build portfolios showcasing their ability to manage conflicts, streamline communication, and foster engagement, all core competencies valued in paid community management roles.

Gaming Mods to Social Media Jobs: Real Teen Success Stories

The leap from casual gaming to professional social media roles is more achievable than many realize, as demonstrated by teens who’ve turned niche hobbies into career-building opportunities. Take 17-year-old Aisha Patel, who began creating custom mods for Minecraft servers at 15. Her intricate fantasy-world designs caught the attention of an indie game studio, leading to a part-time role managing their Discord community. Within a year, Aisha’s ability to resolve conflicts, organize events, and moderate discussions earned her a promotion to junior community manager, a position she balances with her high school studies. Similarly, 16-year-old Marcus Rivera started moderating Roblox forums as a hobby, using free tools like Trello to track player feedback and Canva to design community guidelines. His structured approach impressed a mid-sized gaming company, which hired him to oversee their engagement strategy on X (formerly Twitter), growing their follower base by 40% in six months.

These stories highlight how foundational moderation skills—like clear communication, problem-solving, and digital literacy—translate seamlessly to paid social media roles. For instance, 18-year-old Zoe Kim leveraged her experience running a Fortnite fan page with 50,000 followers into a freelance content moderation gig for a Twitch streamer. By analyzing engagement metrics and filtering toxic comments using Hootsuite’s free tier, she demonstrated her value and now earns income managing multiple creators’ platforms. Platforms like Discord and Reddit remain fertile ground for teens to build portfolios: A 2023 survey by GameDev Careers found that 62% of entry-level community managers in gaming had prior moderation experience, often gained through volunteer roles. Whether through designing mods, organizing virtual events, or curating inclusive spaces, these teens prove that early passion projects can evolve into tangible, resume-worthy opportunities—no prior budget required.

The digital landscape’s rapid evolution demands innovative approaches to content moderation, blending AI’s scalability with human discernment to protect online communities. As this guide illustrates, trust and safety careers offer a dynamic intersection of technology and ethics, where tools like NLP-driven detection systems and certified training programs empower the next generation of professionals. The growing $12.7B moderation market isn’t just creating jobs—it’s inviting teens to become architects of safer digital ecosystems through platforms like Facebook Policy Training and UL-certified virtual labs that transform academic exercises into industry credentials.

For aspiring moderators, the path forward is clear: Leverage free community management tools and gaming mod experiences to build foundational skills, then amplify them through accredited certifications that prioritize real-world policy navigation. With seasonal hiring spikes and AI-human collaboration models becoming industry standards, early adopters of hybrid training programs will lead the charge against emerging threats like AI-generated misinformation. As algorithms and human expertise continue to co-evolve, those who master this symbiosis today won’t just secure careers—they’ll redefine the standards for digital responsibility. The internet’s future guardians aren’t waiting for permission to shape safer spaces; they’re already training, moderating, and certifying their way to the frontlines.

FAQ

How do AI and human moderators collaborate to keep social media safe?

AI handles high-volume tasks like filtering spam and explicit content using pattern recognition (e.g., flagging 94% of hate speech), while humans tackle nuanced cases like sarcasm or cultural context. As detailed in [Why Social Media Needs Both Robots and Humans], this hybrid approach combines AI speed with human judgment: Meta’s systems remove over 95% of hate speech before users report it, while moderators assess ambiguous posts flagged for review.

What entry-level certifications prepare teens for trust and safety careers?

CE-Certified AI training programs and Facebook Policy Training’s virtual labs offer industry-recognized credentials. These programs teach content policy enforcement through simulations, like analyzing misinformation patterns (covered in [Facebook Policy Training: Get Certified While Doing Homework]). UL-certified platforms provide hands-on experience with tools used by Fortune 500 companies, increasing employability for roles requiring ASME-Approved technical skills.

Can gaming moderation experience translate into professional tech roles?

Yes. Managing gaming communities builds skills in conflict resolution, policy enforcement, and tool usage (e.g., Discord bots). As highlighted in [Gaming Mods to Social Media Jobs: Real Teen Success Stories], teens like Aisha Patel leveraged Minecraft modding into paid community management roles. Employers value this hands-on experience, with 62% of entry-level hires having moderation backgrounds.

What free tools help beginners practice content moderation skills?

Start with:

- Discord (server setup, bots)

- Reddit (AutoModerator)

- Trello (workflow management)

These tools, explored in [Free Tools to Practice Online Community Management], mirror professional systems. Hootsuite’s Free Plan teaches scheduling and analytics, while Google Forms aids feedback collection for portfolio-building.

Why are hybrid AI-human systems more effective than automation alone?

AI misses context-dependent violations like sarcasm or evolving slang, requiring human interpretation. A 2023 Stanford study found hybrid systems resolved 40% more cases accurately by combining AI’s scalability with human cultural awareness (see [Why Social Media Needs Both Robots and Humans]). This balance ensures efficient removal of clear violations while preserving nuanced content through expert review.